The standard approach to technical due diligence: hire a consultant, who interviews the CTO for a few days and produces a PDF. Then everyone moves on. Here's what that misses.

Technical due diligence (DD) has a format problem. The process was designed for a world where technology was a cost center and the question was simple: does it work? That question isn't wrong. It's just incomplete.

Today, technology is the business. The platform is the product. The architecture determines what you can build next and how fast you can build it. And the standard DD process hasn't caught up.

The interview problem

Traditional DD is interview-based. A consultant spends two or three days with the CTO and senior engineers. They ask about the architecture, the tech stack, the team structure, the deployment process. They write up what they heard.

The problem: people present the architecture they aspire to, not the one they have. Not out of malice. Out of optimism. Every CTO has a mental model of what the system should look like. That model diverges from reality in ways that are hard to see from inside.

Interviews also suffer from selection bias. You talk to the people who are available and willing. The engineer who's been quietly maintaining a critical legacy system for five years rarely gets invited to the DD sessions. Their knowledge doesn't make it into the report.

What gets missed

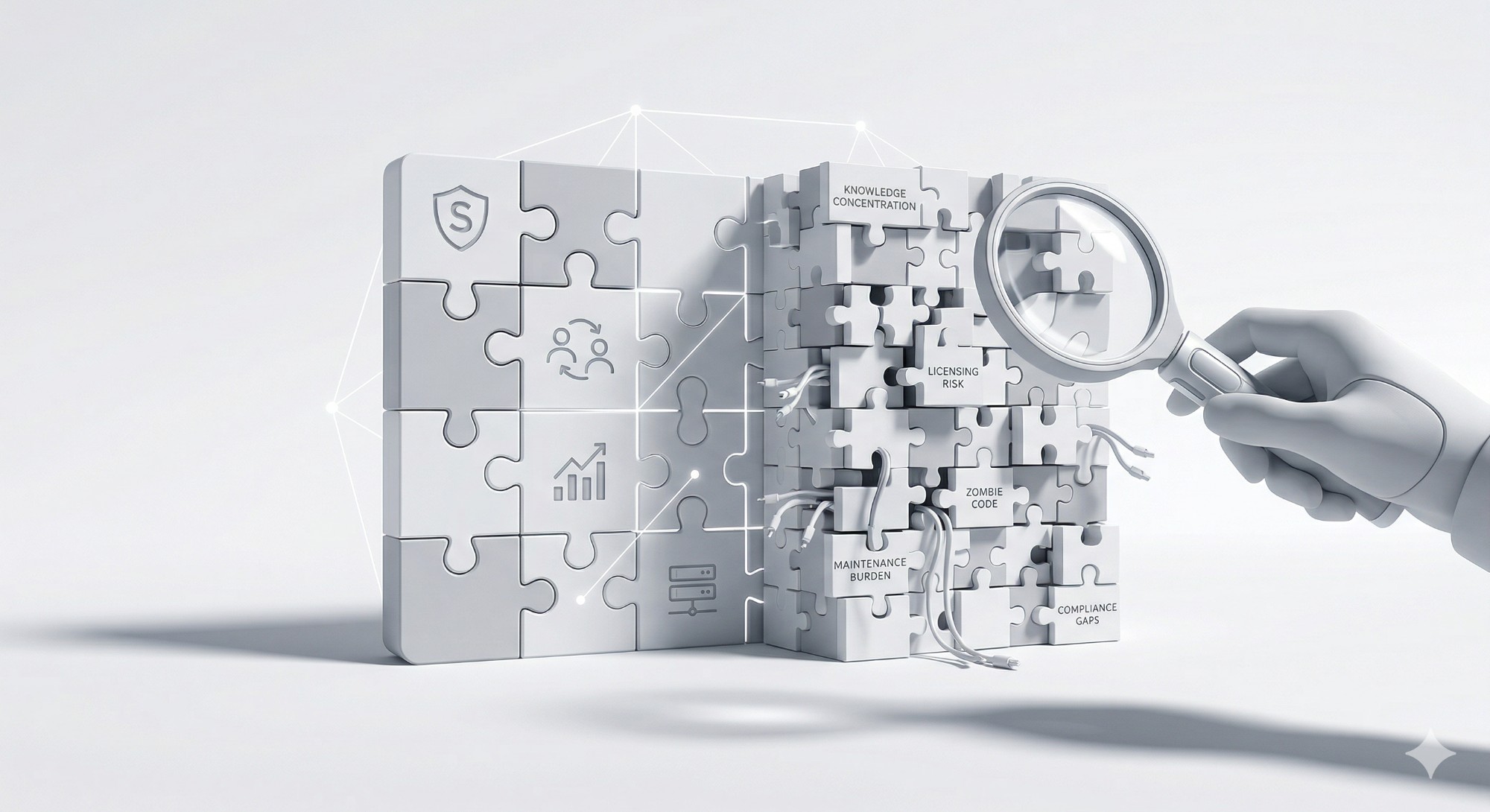

Here's what standard DD consistently fails to surface:

- Knowledge concentration risk: Who actually maintains the critical systems? Is it one person or five? Standard DD asks about team structure. It doesn't analyze git history to see who touches what. The difference between the org chart and the commit log is often the most important finding in an assessment.

- Open-source licensing exposure: Most modern software depends on hundreds of open-source packages. Some of those packages carry licenses that create real legal exposure. Copyleft licenses, such as the GPL, in commercial software. Licenses whose attribution requirements aren't being met. On top of that, dependencies with known vulnerabilities that haven't been patched. Standard DD checks the tech stack. It doesn't audit the dependency tree.

- Zombie code and architecture drift: Every codebase accumulates dead code. Features that were built and abandoned. Modules that were replaced but never removed. Configuration for infrastructure that no longer exists. This isn't just clutter. It's a maintenance burden that slows the entire team. Standard DD asks about the architecture. It doesn't measure how much of the codebase is actually in use.

- Compliance gaps: SOC 2, HIPAA, PCI DSS, GDPR. The CTO says they're compliant. Standard DD takes that at face value or checks for a certificate or audit report. It doesn't look at whether the actual implementation matches the compliance requirements. Encryption at rest that's configured but not enforced. Audit logging that exists but has gaps. Access controls that are documented but not implemented.

- The real maintenance burden: What percentage of engineering time goes to keeping the lights on versus building new capability? Standard DD asks the CTO. The CTO estimates. The estimate is almost always optimistic. Commit history and ticket data tell a different story. When 70% of engineering effort goes to maintenance, that changes the deal math.
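One rough way to check a CTO's maintenance estimate against the record is to classify commit subjects. The Python sketch below assumes the team writes Conventional Commits-style prefixes (`feat:`, `fix:`, `chore(deps):` and so on); the type-to-category mapping and the `classify` and `maintenance_share` names are hypothetical, and commit counts are only a proxy for engineering time. Teams without a commit convention would need ticket data or manual sampling instead.

```python
import re
from collections import Counter

# Hypothetical mapping from Conventional Commits types to effort categories.
# Adjust it to the team's real conventions before trusting the numbers.
CATEGORY_BY_TYPE = {
    "feat": "new",
    "fix": "maintenance",
    "chore": "maintenance",
    "refactor": "maintenance",
    "build": "maintenance",
    "ci": "maintenance",
    "revert": "maintenance",
    "docs": "other",
    "test": "other",
    "style": "other",
}

# Matches "type:", "type(scope):" and "type!:" at the start of a subject line.
PREFIX = re.compile(r"^(\w+)(?:\([^)]*\))?!?:")


def classify(subject: str) -> str:
    """Map one commit subject to 'new', 'maintenance', 'other' or 'unknown'."""
    match = PREFIX.match(subject.strip())
    if not match:
        return "unknown"
    return CATEGORY_BY_TYPE.get(match.group(1).lower(), "unknown")


def maintenance_share(subjects: list[str]) -> float:
    """Maintenance commits as a fraction of maintenance + new-capability commits."""
    counts = Counter(classify(s) for s in subjects)
    classified = counts["maintenance"] + counts["new"]
    return counts["maintenance"] / classified if classified else 0.0


sample = [
    "feat(billing): add invoice export",
    "fix: null check in session handler",
    "chore(deps): bump lodash",
    "refactor: split legacy auth module",
    "Update README",
]
print(f"{maintenance_share(sample):.0%}")  # prints 75%
```

The subjects could come from `git log --no-merges --since="12 months ago" --format=%s`. Untyped subjects such as "Update README" are left out of the ratio rather than guessed, so a low share of typed commits is itself a signal that this proxy is weak for the codebase in question.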

The $5M surprise

Here's a scenario we've seen more than once. A company acquires a technology platform. The DD process checked the boxes. Architecture review, team assessment, security questionnaire. Everything looked reasonable.

Six months post-close, the new owners discover that the platform needs a fundamental rewrite. The architecture can't support the growth plan. The tech debt is deeper than anyone disclosed. The compliance gaps require significant investment to close.

The cost: $5M or more in unplanned engineering spend. A roadmap that's delayed by 18 months. A deal thesis that no longer pencils.

This isn't a failure of intent. It's a failure of method. Interview-based DD can't surface what interviews can't see.

The shift: from checkbox to evidence

Good DD reads the code instead of just talking to people. People forget. People are optimistic. People present the version of reality they believe in. Code doesn't have opinions. Commit history doesn't have aspirations. Dependency manifests don't exaggerate.

Evidence-based DD means: risk mapped to business impact, not adjectives. Compliance verified by framework, not by assertion. Strategic options presented with effort, timeline, and trade-offs. Not a checkbox report. An intelligence product.

What good DD looks like

The right question isn't "is the code clean?" The right question is: "Is the system doing what the business needs it to do? And can it keep doing it as the business grows?"

It starts with the code

Static analysis. Dependency auditing. Architecture mapping from the actual codebase, not from a slide deck. This is the foundation. Everything else builds on it.
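Dependency auditing can start with the license metadata each package declares about itself. The sketch below covers only an installed Python environment, using the standard library's `importlib.metadata`; the copyleft pattern and the function names are assumptions for illustration. Declared metadata is self-reported and sometimes missing or wrong, other ecosystems (npm, Maven, Go modules) need their own tooling, vulnerability scanning is a separate step, and a match is a prompt for legal review, not a finding.

```python
import re
from importlib.metadata import distributions

# Hypothetical triage pattern for copyleft licenses. "GPL" also catches LGPL
# and AGPL, whose obligations differ a lot; MPL is weak (file-level) copyleft.
COPYLEFT = re.compile(
    r"\b(?:A|L)?GPL|General Public License|\bMPL\b|Mozilla Public License",
    re.IGNORECASE,
)


def declared_license(meta) -> str:
    """Best-effort license string from a package's own metadata ('' if none)."""
    # Prefer an SPDX License-Expression (newer metadata), then the free-text field.
    for field in ("License-Expression", "License"):
        value = (meta.get(field) or "").strip()
        if value and value.upper() != "UNKNOWN":
            return value
    # Fall back to trove classifiers such as "License :: OSI Approved :: MIT License".
    classifiers = meta.get_all("Classifier") or []
    return "; ".join(
        c.split("::")[-1].strip() for c in classifiers if c.startswith("License ::")
    )


def installed_licenses() -> dict[str, str]:
    """Map each installed distribution's name to its declared license."""
    result = {}
    for dist in distributions():
        meta = dist.metadata
        if meta is None:  # defensive: skip installs with no readable metadata
            continue
        result[meta.get("Name") or "<unnamed>"] = declared_license(meta)
    return result


def flag_licenses(licenses: dict[str, str]) -> dict[str, str]:
    """Return packages whose license is undeclared or matches the copyleft pattern."""
    flagged = {}
    for name, lic in licenses.items():
        if not lic:
            flagged[name] = "no license declared"
        elif COPYLEFT.search(lic):
            flagged[name] = f"copyleft marker: {lic}"
    return flagged
```

Calling `flag_licenses(installed_licenses())` lists the packages in the current environment that deserve a closer look. Keeping the flagging logic separate from collection means the same check can run over license data gathered from any other source.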

It maps the people to the systems

Who built what. Who maintains what. Where knowledge is concentrated and where it's distributed. This is the risk layer that interview-based DD misses entirely.
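Mapping people to systems can start from the commit log. This sketch assumes the top-level directory is a reasonable stand-in for a "system" and that file touches per author approximate who holds the knowledge; both are simplifications. The `AUTHOR:` marker, the 0.8 threshold, and the function names are hypothetical. It flags modules where a single author accounts for most of the changes.

```python
from collections import Counter, defaultdict

# Hypothetical marker; must match the --format string used when running git log.
AUTHOR_PREFIX = "AUTHOR:"


def parse_git_log(text: str) -> list[tuple[str, str]]:
    """Turn `git log --format=AUTHOR:%aN --name-only` output into (author, path) pairs."""
    pairs = []
    author = None
    for line in text.splitlines():
        if line.startswith(AUTHOR_PREFIX):
            author = line[len(AUTHOR_PREFIX):].strip()
        elif line.strip() and author is not None:
            pairs.append((author, line.strip()))
    return pairs


def module_of(path: str) -> str:
    """Treat the top-level directory as the 'system'; root-level files map to '.'."""
    return path.split("/", 1)[0] if "/" in path else "."


def concentration(
    pairs: list[tuple[str, str]], threshold: float = 0.8
) -> dict[str, tuple[str, float]]:
    """Per module, report the top author's share of file touches if it meets threshold."""
    touches = defaultdict(Counter)
    for author, path in pairs:
        touches[module_of(path)][author] += 1
    flagged = {}
    for module, counts in touches.items():
        top_author, top_count = counts.most_common(1)[0]
        share = top_count / sum(counts.values())
        if share >= threshold:
            flagged[module] = (top_author, share)
    return flagged


log = """AUTHOR:Dana
billing/invoice.py
billing/tax.py

AUTHOR:Dana
billing/invoice.py

AUTHOR:Sam
web/app.ts
billing/tax.py

AUTHOR:Lee
web/app.ts
"""
print(concentration(parse_git_log(log), threshold=0.7))  # prints {'billing': ('Dana', 0.75)}
```

Input could come from `git log --no-merges --format=AUTHOR:%aN --name-only`, where `%aN` applies the repository's `.mailmap` so one person's multiple identities are merged. A flagged module is a question for the interviews, not a conclusion: the top author may have left, or may be one of several people who review every change.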

It quantifies the debt

Not "there's some tech debt" but "here's what it would cost to address it, here's the risk of not addressing it, and here's the timeline." Decision-grade information, not hand-waving.

It connects technology to business outcomes

Can this platform support 10x growth? What would need to change? What's the cost and timeline? These are the questions that matter for deal math, and they require both technical depth and business context.

"DD should answer one question: given what we now know about this technology, does the deal still make sense? Everything else is noise."

The governance lens

Due diligence isn't just for M&A. The same gaps exist in ongoing governance. Boards that rely on the CTO's quarterly update have the same blind spots as acquirers who rely on interview-based DD.

The question is always the same: is the technology aligned with the business? Is the investment producing the expected return? Are there risks that aren't visible from the boardroom?

Evidence-based assessment answers these questions. Not once, during a transaction. Continuously, as the business evolves.

We give business leaders an independent view of whether their technology is aligned to their business. Not opinions. Evidence. Not a checkbox. A clear picture of risk, exposure, liability, and strategic options.